Adding Shared LVM Storage

Overview

You can store virtual server data remotely to gain advantages over local storage. SolusVM supports Shared LVM Storage, which uses iSCSI targets to store data.

This document explains how to add Shared LVM Storage to your SolusVM cluster.

Prerequisites

- You must have configured iSCSI targets that will provide the disk space.

- Each iSCSI target must be reachable over the network from all Compute Resources that will use it for Shared LVM Storage.
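Before you start, you may want to confirm that every Compute Resource can reach the target's portal. The following is a minimal sketch and is not part of SolusVM. The function name portal_reachable is hypothetical. It assumes that bash (for its /dev/tcp redirection) and the coreutils timeout command are available. It only checks that a TCP connection to the portal can be opened. It does not log in to the target.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of SolusVM): succeeds if a TCP connection to
# the iSCSI portal can be opened within 3 seconds. Requires bash (/dev/tcp)
# and the coreutils "timeout" command.
portal_reachable() {
  local host="$1"
  local port="${2:-3260}"  # 3260 is the default iSCSI port
  # Open and immediately close the connection in a child bash so that
  # "timeout" can stop it if the portal does not answer.
  timeout 3 bash -c ': < "/dev/tcp/$1/$2"' _ "$host" "$port" 2>/dev/null
}

# Usage (192.0.2.10 is a placeholder portal address):
#   portal_reachable 192.0.2.10 && echo "portal reachable"
#   portal_reachable 192.0.2.10 3261 || echo "portal not reachable"
```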

Known Issues and Limitations

- Shared LVM Storage is not supported on Virtuozzo Hybrid Server/OpenVZ 7 Compute Resources because of their outdated tooling.

- The SolusVM Shared LVM Storage implementation does not support Thin LVM or snapshots.

Adding the Storage

Add Shared LVM Storage in three parts:

- Configure an iSCSI initiator on every Compute Resource that will use Shared LVM Storage.

- Configure LVM and create volume groups.

- Add Shared LVM Storage in SolusVM.

Note:

- These instructions provide general guidance, not the only way to configure Shared LVM Storage. Experienced Linux administrators can fine-tune the parameters.

- You should be comfortable using the command line and editing configuration files by hand.

- The specific steps may vary slightly depending on the OS a particular Compute Resource runs.

Configuring an iSCSI Initiator

To configure an iSCSI initiator on a Compute Resource:

1. Install the necessary packages:

   On Debian or Ubuntu:

      apt install open-iscsi multipath-tools lvm2-lockd sanlock

   On AlmaLinux:

      yum install iscsi-initiator-utils device-mapper-multipath lvm2-lockd sanlock

Note:

We strongly recommend using sanlock as the lock manager. We cannot guarantee that other lock managers will work without issues.

2. Start, enable, and configure the iscsid service:

      systemctl start iscsid
      systemctl enable iscsid
      iscsiadm -m discovery -t sendtargets -p <IP address[:port]>
      iscsiadm -m node -L all
      iscsiadm -m node --op update -n node.startup -v automatic

   Substitute the IP address of the iSCSI target’s portal for <IP address>. If you omit the [:port] parameter, the system uses the default value, 3260.

3. Edit the /etc/lvm/lvm.conf file and set the use_lvmlockd parameter to 1. The line must look like this:

      use_lvmlockd = 1

4. Edit the /etc/lvm/lvmlocal.conf file and set the host_id parameter. The value must be a number between 1 and 2000, and it must be unique for every Compute Resource. For example, you can use host_id = 1 for one Compute Resource, host_id = 2 for another, and so on.

5. Start and enable the multipathd service:

      systemctl start multipathd
      systemctl enable multipathd

6. Edit the /etc/multipath.conf file by adding the following lines to it. If the file does not exist, create it first and give it 644 permissions:

      defaults {
          user_friendly_names yes
      }

7. Find the WWID of the device that corresponds to the iSCSI target, and add it to the wwids file:

   a. List the block devices:

      lsblk

   b. Get the device’s WWID. Substitute the name of the device (for example, sda) for <device name>:

      /lib/udev/scsi_id -g -u -d /dev/<device name>

   c. Add the WWID to multipath. Substitute the output of the previous command (an alphanumeric string, for example, 3600a0980383234526f2b595539355537) for <WWID>:

      multipath -a <WWID>

   d. Reconfigure multipath:

      multipath -r

8. Restart the multipathd service:

      systemctl restart multipathd

9. Verify that the multipathd service is configured properly:

      multipath -ll

   The output should look like this:

      3600a0980383234526f2b595539355537 dm-1 NETAPP,LUN C-Mode
      size=300G features='3 queue_if_no_path pg_init_retries 50' hwhandler='1 alua' wp=rw
      `-+- policy='service-time 0' prio=50 status=active
        `- 7:0:0:0 sda 8:16 active ready running

10. Start and enable the lock services:

      systemctl start lvmlockd
      systemctl enable lvmlockd
      systemctl start sanlock
      systemctl enable sanlock
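If you prefer to script the WWID lookup and the multipath check, the following sketch shows one way to do it. It is not part of SolusVM. The function names are hypothetical, as is the SCSI_ID variable for overriding the scsi_id path. list_iscsi_wwids prints each whole disk whose transport lsblk reports as iscsi, together with its WWID. mpath_paths_healthy reads multipath -ll output and succeeds only if every path line ends in "active ready running", as in the sample output above.

```bash
#!/usr/bin/env bash
# Hypothetical helpers (not part of SolusVM) for finding WWIDs and checking
# multipath paths. Run as root.

# Print "<device> <WWID>" for every whole disk whose transport is iSCSI.
# SCSI_ID may point to another scsi_id binary; the default is the path
# used in the procedure above.
list_iscsi_wwids() {
  local scsi_id="${SCSI_ID:-/lib/udev/scsi_id}"
  # -d: whole disks only, -n: no header line; TRAN is the transport type.
  lsblk -dn -o NAME,TRAN | while read -r name tran; do
    [ "$tran" = "iscsi" ] || continue
    printf '%s %s\n' "$name" "$("$scsi_id" -g -u -d "/dev/$name")"
  done
}

# Read "multipath -ll" output on stdin. Succeed only if at least one path
# line exists and every path line ends in "active ready running".
mpath_paths_healthy() {
  awk '
    # Path lines contain a SCSI address such as 7:0:0:0 (host:channel:target:lun).
    /[0-9]+:[0-9]+:[0-9]+:[0-9]+/ {
      paths++
      # Last three fields: device-mapper path state, checker state, device state.
      if ($(NF-2) != "active" || $(NF-1) != "ready" || $NF != "running") bad++
    }
    END { if (paths > 0 && bad == 0) exit 0; exit 1 }
  '
}

# Usage:
#   list_iscsi_wwids    # prints one "<device> <WWID>" line per iSCSI disk
#   multipath -ll | mpath_paths_healthy && echo "multipath OK"
```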

You configured the iSCSI initiator for your iSCSI target on a single Compute Resource. Repeat this procedure on every Compute Resource that will offer Shared LVM Storage using that specific iSCSI target. Make sure to give each Compute Resource a unique host_id parameter during step 4.
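When you repeat the procedure on each Compute Resource, you can also apply steps 3 and 4 non-interactively. The following is a minimal sketch and is not part of SolusVM. The function names are hypothetical. It assumes GNU sed and stock files that already contain a use_lvmlockd line in lvm.conf and a host_id line (commented out or not) in lvmlocal.conf. Review both files afterwards.

```bash
#!/usr/bin/env bash
# Hypothetical helpers (not part of SolusVM) for steps 3 and 4. They assume
# GNU sed and config files that already contain a use_lvmlockd line and a
# host_id line (commented out or not), as the stock files usually do.

# Step 3: set "use_lvmlockd = 1", keeping the line's indentation.
enable_lvmlockd() {
  local conf="${1:-/etc/lvm/lvm.conf}"
  sed -i -E 's/^([[:space:]]*)#?[[:space:]]*use_lvmlockd[[:space:]]*=.*/\1use_lvmlockd = 1/' "$conf" || return 1
  # Fail if the file still has no active "use_lvmlockd = 1" line.
  grep -Eq '^[[:space:]]*use_lvmlockd[[:space:]]*=[[:space:]]*1[[:space:]]*$' "$conf"
}

# Step 4: set "host_id = <id>"; the id must be 1-2000 and unique per node.
set_host_id() {
  local id="$1"
  local conf="${2:-/etc/lvm/lvmlocal.conf}"
  # Reject empty values, leading zeros, non-digits, and more than 4 digits.
  case "$id" in
    '' | 0* | *[!0-9]* | ?????*)
      echo "host_id must be an integer from 1 to 2000" >&2; return 1 ;;
  esac
  if [ "$id" -gt 2000 ]; then
    echo "host_id must be an integer from 1 to 2000" >&2; return 1
  fi
  sed -i -E "s/^([[:space:]]*)#?[[:space:]]*host_id[[:space:]]*=.*/\\1host_id = ${id}/" "$conf" || return 1
  # Fail if the expected "host_id = <id>" line is not present afterwards.
  grep -Eq "^[[:space:]]*host_id[[:space:]]*=[[:space:]]*${id}[[:space:]]*\$" "$conf"
}

# Usage on the first Compute Resource (as root); use 2, 3, ... on the others:
#   enable_lvmlockd && set_host_id 1
```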

Once you configure the iSCSI initiator on every Compute Resource, you need to configure LVM and create volume groups.
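Before you move on, it can help to confirm on each Compute Resource that the services from the iSCSI initiator procedure are running and enabled. The following is a minimal sketch and is not part of SolusVM. The function name is hypothetical, and the sketch relies only on systemctl is-active and systemctl is-enabled.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight check (not part of SolusVM): reports every service
# from the iSCSI initiator procedure that is not both active and enabled,
# and returns non-zero if any is missing. Run it on each Compute Resource.
check_shared_lvm_services() {
  local svc rc=0
  for svc in iscsid multipathd lvmlockd sanlock; do
    if ! systemctl is-active --quiet "$svc"; then
      echo "not active: $svc" >&2
      rc=1
    fi
    if ! systemctl is-enabled --quiet "$svc"; then
      echo "not enabled: $svc" >&2
      rc=1
    fi
  done
  return "$rc"
}

# Usage:
#   check_shared_lvm_services && echo "ready to configure LVM"
```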

Configuring LVM and Creating a Volume Group

To configure LVM and create a volume group:

1. On one Compute Resource only, run the following command. Substitute a name for the volume group for <vg_name>, and substitute the WWID of the device that corresponds to the iSCSI target for <wwid>:

      vgcreate --shared <vg_name> /dev/mapper/<wwid>

   For example:

      vgcreate --shared shared_vg /dev/mapper/3600a0980383234526f2b595539355537

2. On every Compute Resource you configured an iSCSI initiator on, run the following command:

      vgchange --lock-start

3. (AlmaLinux only) On every Compute Resource you configured an iSCSI initiator on, run the following command:

      vgimportdevices --all
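To confirm from a script that a volume group was created in shared mode, you can check its vg_attr field. The sixth character is s for a shared volume group, as in the wz--ns value in the vgs output shown below. The following is a minimal sketch and is not part of SolusVM. The function name is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical check (not part of SolusVM): succeeds if the named volume
# group is shared. The sixth character of vg_attr is "s" for shared VGs.
vg_is_shared() {
  local attr
  # --noheadings drops the header; tr strips the leading padding spaces.
  # An unknown VG yields an empty string, so the comparison below fails.
  attr=$(vgs --noheadings -o vg_attr "$1" 2>/dev/null | tr -d '[:space:]')
  [ "${attr:5:1}" = "s" ]
}

# Usage, on any Compute Resource where steps 2 and 3 were run:
#   vg_is_shared shared_vg && echo "shared_vg is a shared VG"
```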

Every Compute Resource you performed steps 2 and 3 on can now use the volume group. You can verify this by running the vgs command on those Compute Resources. The output should look like this:

      VG        #PV #LV #SN Attr   VSize    VFree
      shared_vg   1   3   0 wz--ns <300.00g <296.00g

The final step is to add the Shared LVM Storage in SolusVM.

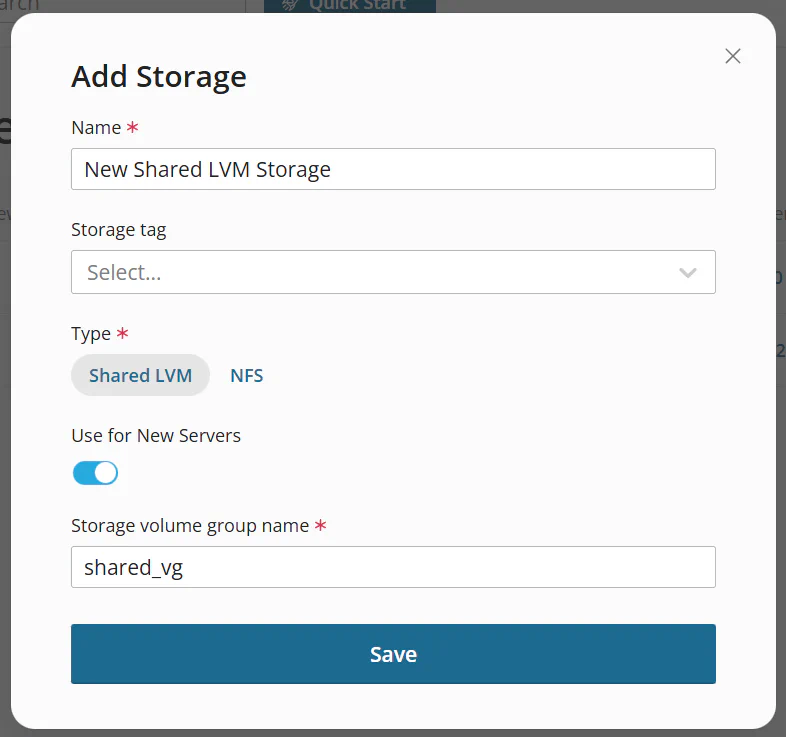

Adding Shared LVM Storage in SolusVM

To add Shared LVM Storage:

1. Go to Storage, and then click Add Storage.

2. Give your storage a name.

3. (Optional) Select a Storage tag.

4. Set the Type parameter to Shared LVM.

5. Specify the name of the volume group you created earlier.

6. Click Save.

You added Shared LVM Storage. You can now assign it to Compute Resources.